Medical devices are becoming increasingly pervasive and interconnected, with hospitals averaging 10 to 15 network-connected devices per bed. In 2014 and 2015, 90% of hospitals were victims of cyber-attacks, and addressing these attacks cost the healthcare industry $6 billion. Unfortunately, healthcare is becoming the most victimized of all industries, accounting for 27% of all breaches in the first half of 2016.

It’s more important now than ever to control access to medical devices so only the right people can do the right things with them. This is variously known as access control, authentication, or identity management, and getting it wrong can lead to security problems, poor usability, or both.

What kinds of security problems?

- Multiple parties have demonstrated that it is possible to gain control of certain insulin pumps and remotely perform actions such as waking up the pump, starting and stopping the insulin injection, or immediately injecting a bolus dose of insulin into the human body.

- A pacemaker manufacturer recently had to issue patches for a security hole that could allow an attacker to access implants remotely. The attacker would be able to issue catastrophic commands, like generating shocks or disabling the implant, over a wireless network. Unfortunately, the manufacturer sustained a costly hit to its stock price.

- Hackers can exploit insecure medical devices to attack a health facility’s entire network. Separate attacks on two U.S. hospitals took one facility’s computers offline for a week, while another had to notify over 30,000 patients that their records had been deleted and potentially disclosed to the attackers. In addition to higher operating costs, impaired patient care, and having to pay for credit monitoring for affected patients, these facilities also had to pay ransom to the attackers to get their systems back online.

“Just lock it down” doesn’t work.

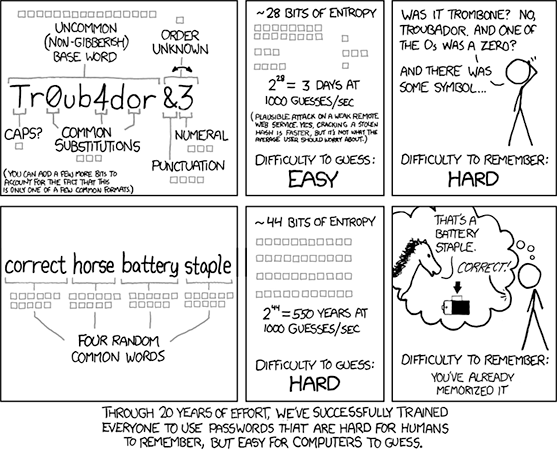

After reading the examples above, a medical device developer might be inclined to enforce rigid security requirements, like 20-character complex passwords that must be changed every 7 days. Don’t do this.

Overly rigid security measures, such as an overly complex password, can provide a false sense of security and can lead to problems like the following:

- One system timed out every five minutes, requiring the user to log back in. That translated into the clinician spending 1.5 hours of each shift logging in.

- Another system prevented users from logging in if they were already logged in somewhere else. So if a clinician going into surgery discovered she was still logged in outside the operating room, she’d either have to un-gown or yell for a colleague in the non-sterile area to go log her out.

When users, especially in health care, are faced with obstacles to getting their job done, they get creative in working around those obstacles, making the systems less secure. Researchers looking at this topic found the following workarounds:

- Putting Styrofoam cups over proximity sensors or having a junior team member repeatedly press the spacebar on everyone’s keyboard to prevent timeouts during a procedure.

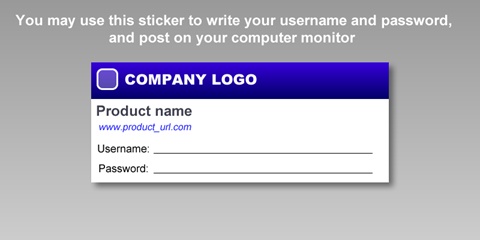

- Sharing passwords to a medical device among entire hospital units by taping the password onto the device.

- Sometimes well-intentioned medical system manufacturers encourage workarounds, like distributing this sticker (branding altered to protect the guilty).

Workarounds like these can lead to inadvertent exposure of protected health information as well as accidents, such as entering a medication order for the wrong patient because the previous clinician did not log out properly.

Make it usable AND secure.

Logging in is never a primary user goal; users log in so they can accomplish some other task. It’s essential to make authentication as easy as possible for authorized users while preventing those without permission from using the system. Medical device developers should minimize the distraction of logging in so users can focus on necessary tasks.

One place to start is to carefully develop a threat model, analyzing which interactions with a device are higher risk and need securing. For example, seeing that the infusion pump is running or how much infusion time remains might be lower risk and not require login, while changing the dose is probably higher risk and needs to be password-protected. This way, the user can still get needed information from the system without having to expend time and effort logging in. Once a user is logged in, they should be able to take “protected” actions for the duration of their login session. Forcing authentication for each individual feature leads to choppy workflows and potentially insecure workarounds.
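As a rough illustration of the idea above, the sketch below tags each device action with a risk level and only asks for a login when the action is high risk. The action names, the `Risk` levels, and the `Session` type are all hypothetical; a real device would derive them from its own threat model.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Risk(Enum):
    LOW = "low"    # may be shown or done without logging in
    HIGH = "high"  # requires an authenticated session


# Hypothetical actions and risk levels, for illustration only.
ACTION_RISK = {
    "view_pump_status": Risk.LOW,
    "view_time_remaining": Risk.LOW,
    "change_dose": Risk.HIGH,
}


@dataclass
class Session:
    user: Optional[str] = None  # None means nobody is logged in

    @property
    def authenticated(self) -> bool:
        return self.user is not None


def is_allowed(action: str, session: Session) -> bool:
    """Return True if the action may proceed in the current session."""
    # Actions missing from the table are treated as high risk so that
    # nothing is exposed by accident.
    risk = ACTION_RISK.get(action, Risk.HIGH)
    if risk is Risk.LOW:
        return True
    # One login covers every protected action for the whole session.
    return session.authenticated


anonymous = Session()
nurse = Session(user="nurse_a")

print(is_allowed("view_pump_status", anonymous))  # True
print(is_allowed("change_dose", anonymous))       # False
print(is_allowed("change_dose", nurse))           # True
```

Note that the check looks at the session, not at the individual feature, so a logged-in user is not asked to authenticate again for each protected action.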

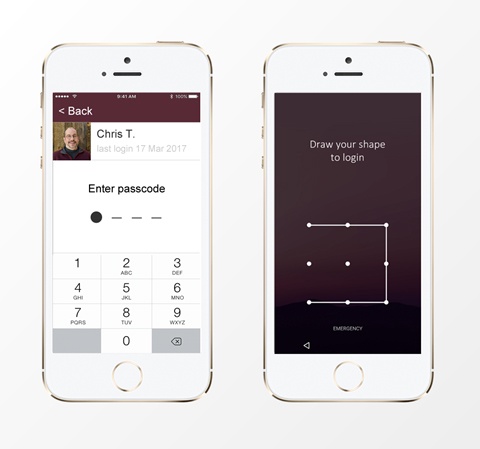

If your system requires users to log in, consider ways to reduce or eliminate the need for them to memorize and enter information. They have enough stuff to remember for their “day job.” Consider the following:

- Authenticate with a badge or fingerprint.

- Show a list of users so people only have to remember their password.

- Simplify the password to a number or shape.

- Minimize or eliminate complex password “recipes.”

It’s important to consider everything that could go wrong, and give the user a clear path to resolving problems. As medical device developers, one way we can do this is by providing clear messages for authentication errors, a means of resetting a forgotten password directly on the device, and a way to get help if the user cannot resolve the problem independently.

We also need to consider carefully how and when to end a user’s login session. If the session ends too soon, it could interfere with the user’s work, but waiting too long could leave the session open to misuse by unauthorized and authorized users alike.
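One common way to handle this trade-off is an idle timeout that restarts whenever the user interacts with the device. The sketch below is a minimal version of that approach, not a recommendation of specific values: the `IdleSession` class and the 600-second timeout are placeholders, and the right duration should come from observing the actual workflow.

```python
import time


class IdleSession:
    """Login session that ends after a period without user activity."""

    def __init__(self, timeout_s: float, clock=time.monotonic):
        self.timeout_s = timeout_s
        self._clock = clock  # injectable so the logic can be tested
        self._last_activity = clock()

    def touch(self) -> None:
        """Record user activity, restarting the idle countdown."""
        self._last_activity = self._clock()

    def seconds_left(self) -> float:
        """Time remaining before the session ends, never below zero."""
        idle = self._clock() - self._last_activity
        return max(0.0, self.timeout_s - idle)

    def expired(self) -> bool:
        return self._clock() - self._last_activity >= self.timeout_s


# Simulated clock so the example runs instantly.
now = [0.0]
session = IdleSession(timeout_s=600, clock=lambda: now[0])

now[0] = 590
print(session.expired())       # False
print(session.seconds_left())  # 10.0

session.touch()                # user presses a key; countdown restarts
now[0] = 1200
print(session.expired())       # True
```

A value like `seconds_left()` could also drive an on-screen warning shortly before the session ends, so the user is not surprised in the middle of a task.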

Creating usable and secure systems starts with understanding the user’s workflow and adapting the system to it. This is much easier than changing human behavior. We should observe users performing their work in context to answer questions like:

- What information do they need? What actions are they taking?

- How often do they need to interact with the device? For how long?

- What else are they doing? How often are they interrupted?

Conclusion

In the U.S., the Food and Drug Administration is applying increasing scrutiny to cybersecurity, issuing guidance on cybersecurity documentation needs for premarket submissions and maintaining a repository of additional resources. Medical device developers need to consider security throughout the product development process.

In the end, medical products need to address both usability and security concerns to minimize risk, be effective, and achieve market success. Considering them together, rather than separately, will result in a more balanced approach.

Sources and further reading

- http://breachlevelindex.com/

- http://cacm.acm.org/magazines/2016/10/207766-a-brief-chronology-of-medical-device-security/fulltext

- https://articles.uie.com/focusing-on-what-our-users-shouldnt-focus-on/

- https://uxplanet.org/nailing-the-ux-of-authentication-on-mobile-2b69ceab26df#.xepa22tsv

- http://www.cs.dartmouth.edu/~sws/pubs/ksbk15-draft.pdf

- http://ieeexplore.ieee.org/document/6026732/?reload=true

- https://www.wired.com/2017/03/medical-devices-next-security-nightmare